Why Performance Regression Detection Belongs in CI/CD

Most teams already automate unit tests, API checks, and security scans in their pipelines, but performance validation is often left behind until staging or production. That gap is expensive. A small code change, dependency update, schema migration, or infrastructure tweak can quietly add latency, reduce throughput, or increase CPU and memory consumption long before anyone notices.

AI-powered performance regression detection closes that gap by continuously learning what normal looks like for a service, build, or workload, then flagging deviations early. Instead of relying only on fixed thresholds such as “p95 latency must stay under 500 ms,” the pipeline can compare each run against a baseline profile, account for natural noise, and surface anomalies that deserve investigation.

For QA and performance engineers, this is a major shift. You are no longer just collecting metrics after the fact. You are creating a feedback loop that helps developers catch regressions at pull request time, during nightly builds, or after every deployment to a test environment.

What AI Adds Beyond Traditional Thresholds

Traditional performance gating works best when the system is stable, the workload is predictable, and the acceptable limits are known in advance. In practice, test environments drift, traffic patterns vary, and microservices interact in ways that make rigid thresholds noisy or brittle.

AI helps by introducing pattern recognition and context. It can learn from historical test results and identify signals that are hard to capture with static rules alone.

- Adaptive baselines: Compare a new run to similar prior runs instead of a single fixed limit.

- Noise reduction: Separate expected variability from meaningful degradation.

- Multi-metric correlation: Detect combined changes in latency, error rate, CPU, GC pauses, and memory pressure.

- Anomaly scoring: Prioritize regressions by severity and confidence.

- Trend awareness: Identify slow drift before it becomes a user-visible incident.

This makes AI especially useful for large test suites, where multiple services, environments, and workloads produce a lot of telemetry and leave very little time to analyze it manually.
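To make the trend-awareness idea concrete, here is a minimal sketch that fits a least-squares line through a metric's recent values, one value per build, and reports the slope. The function name `trendSlope` and the sample numbers are illustrative assumptions; a real detector would also need to judge whether the slope is larger than the run-to-run noise.

```javascript
// Least-squares slope of a metric over consecutive builds (x = 0, 1, 2, ...).
// A persistently positive slope on a "higher is worse" metric suggests drift,
// even when no single build looks alarming on its own.
function trendSlope(values) {
  const n = values.length;
  if (n < 2) throw new Error('need at least two points');
  const meanX = (n - 1) / 2;
  const meanY = values.reduce((sum, v) => sum + v, 0) / n;
  let numerator = 0;
  let denominator = 0;
  values.forEach((y, x) => {
    numerator += (x - meanX) * (y - meanY);
    denominator += (x - meanX) ** 2;
  });
  return numerator / denominator;
}

// Hypothetical p95 latency (ms) over five builds: each step is only 1%,
// but the metric climbs by 2 ms per build.
const slopeMsPerBuild = trendSlope([200, 202, 204, 206, 208]);
```

Here `slopeMsPerBuild` evaluates to 2, a change that a per-build percentage threshold would likely never flag.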

Designing a Practical Detection Strategy

A good performance regression strategy starts with clear questions: What are we protecting? Which user journeys matter most? Which metric values are “bad enough” to block a release? The answers should be aligned with business risk, not just benchmark curiosity.

Before introducing machine learning, establish the performance signals that your pipeline will collect consistently. Typical signals include:

- Response time percentiles such as p50, p95, and p99

- Throughput, request rate, and concurrency

- Error rates and timeouts

- CPU, memory, disk, and network usage

- Garbage collection pauses and thread contention

- Service-level indicators such as queue depth or saturation

Once those signals are stable, you can create a baseline profiling layer that learns normal behavior across builds, branches, and environments.
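As a rough illustration of how one of these signals might be derived, the helper below computes latency percentiles from raw samples using the nearest-rank method. The names `percentile` and `summarizeLatency` and the sample values are assumptions made for this sketch; many load-testing tools report these percentiles directly.

```javascript
// Nearest-rank percentile: the smallest sample such that at least p percent
// of all samples are less than or equal to it.
function percentile(samples, p) {
  if (samples.length === 0) throw new Error('no samples');
  if (p <= 0 || p > 100) throw new Error('p must be in (0, 100]');
  const sorted = [...samples].sort((a, b) => a - b); // numeric sort, copy first
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[rank - 1];
}

function summarizeLatency(samplesMs) {
  return {
    p50: percentile(samplesMs, 50),
    p95: percentile(samplesMs, 95),
    p99: percentile(samplesMs, 99),
  };
}

// Hypothetical latency samples in milliseconds, including one slow outlier.
const summary = summarizeLatency([120, 95, 101, 130, 480, 110, 99, 105, 115, 98]);
```

With only ten samples, the single 480 ms outlier becomes both the p95 and the p99 while the p50 stays at 105 ms, which is one reason to record sample size alongside the percentiles.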

Baseline Profiling in Practice

Baseline profiling is the foundation of AI-powered regression detection. A baseline is not just one number; it is a model of expected behavior under a defined workload and environment. That model should capture:

- Workload shape: login flow, search, checkout, report generation, or batch processing

- Environment context: container size, instance type, warm vs. cold cache, database state

- Build metadata: commit SHA, branch, dependency versions, feature flags

- Historical variance: acceptable spread across repeated runs

A practical baseline is usually built from several prior runs, not one golden run. This helps reduce false positives caused by transient cloud noise or test-data quirks.
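One simple way to build a baseline from several runs is to store a mean and a standard deviation per metric. The sketch below assumes each prior run is a flat object of numeric metrics; the function name `buildBaseline` and the field names are illustrative, not part of any particular tool.

```javascript
// Build a baseline profile (mean and sample standard deviation per metric)
// from several prior runs collected under the same workload and environment.
function buildBaseline(runs, metricNames) {
  if (runs.length < 2) {
    throw new Error('need at least two runs to estimate variance');
  }
  const profile = {};
  for (const name of metricNames) {
    const values = runs.map((run) => run[name]);
    const mean = values.reduce((sum, v) => sum + v, 0) / values.length;
    const variance =
      values.reduce((sum, v) => sum + (v - mean) ** 2, 0) / (values.length - 1);
    profile[name] = { mean, stdDev: Math.sqrt(variance), runs: values.length };
  }
  return profile;
}

// Hypothetical metrics from three prior runs.
const priorRuns = [
  { p95Latency: 200, throughput: 1000 },
  { p95Latency: 210, throughput: 980 },
  { p95Latency: 190, throughput: 1020 },
];
const baselineProfile = buildBaseline(priorRuns, ['p95Latency', 'throughput']);
```

Keeping the spread next to the mean lets a later comparison ask whether a change is large relative to normal variation, rather than large in absolute terms.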

How Machine Learning Detects Regression Patterns

You do not need an advanced research platform to get value from machine learning. In most CI/CD environments, relatively simple models can provide strong results when combined with good feature engineering and disciplined test data.

Common approaches include:

- Statistical anomaly detection: Z-score, percentile bands, or rolling standard deviation

- Time-series forecasting: Predict expected metric values and measure residual error

- Clustering: Group runs by workload and environment similarity before comparison

- Classification: Predict whether a run is likely to have regressed based on metric patterns

- Change-point detection: Detect when the metric distribution shifts after a code change
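The simplest of these approaches, a Z-score check, can be sketched in a few lines. The example assumes a baseline that already holds a mean and a standard deviation for the metric; the function names and the threshold of 3 are assumptions for illustration, and the check is one-sided because higher latency is the only direction treated as a problem.

```javascript
// How many standard deviations a new value sits above (or below) the baseline mean.
function zScoreAgainstBaseline(value, { mean, stdDev }) {
  if (stdDev === 0) {
    // No recorded variation: any difference is infinitely surprising.
    if (value === mean) return 0;
    return value > mean ? Infinity : -Infinity;
  }
  return (value - mean) / stdDev;
}

// One-sided check for a "higher is worse" metric such as latency.
function isRegression(value, baseline, threshold = 3) {
  return zScoreAgainstBaseline(value, baseline) > threshold;
}

// Hypothetical baseline for p95 latency in milliseconds.
const latencyBaseline = { mean: 200, stdDev: 10 };
const clearlySlower = isRegression(240, latencyBaseline); // z = 4
const withinNoise = isRegression(215, latencyBaseline);   // z = 1.5
```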

In performance testing, the best results often come from hybrid systems. For example, an anomaly detector may flag a suspicious run, while a rules layer checks whether the same build also exceeded a known SLA or produced error spikes. This combination keeps the signal useful for QA triage.
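A hybrid check of this kind might look like the sketch below. The verdict policy (fail only when the anomaly signal and a hard rule agree, warn when just one fires) is an assumption chosen for illustration, as are the field names and thresholds.

```javascript
// Combine a statistical anomaly signal with explicit rules.
// `run.anomalyScore` is assumed to come from a separate detector.
function triage(run, rules) {
  const anomalous = run.anomalyScore >= rules.anomalyThreshold;

  const ruleBreaches = [];
  if (run.p95LatencyMs > rules.slaP95Ms) ruleBreaches.push('sla_p95');
  if (run.errorRate > rules.maxErrorRate) ruleBreaches.push('error_rate');

  let verdict = 'pass';
  if (anomalous && ruleBreaches.length > 0) {
    verdict = 'fail'; // both layers agree something is wrong
  } else if (anomalous || ruleBreaches.length > 0) {
    verdict = 'warn'; // only one layer fired: worth a look, not a block
  }
  return { verdict, anomalous, ruleBreaches };
}

// Hypothetical rule set and run.
const rules = { anomalyThreshold: 3, slaP95Ms: 500, maxErrorRate: 0.01 };
const result = triage({ anomalyScore: 4.2, p95LatencyMs: 540, errorRate: 0.002 }, rules);
```

Returning the individual breaches alongside the verdict keeps the output useful for triage, not just for gating.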

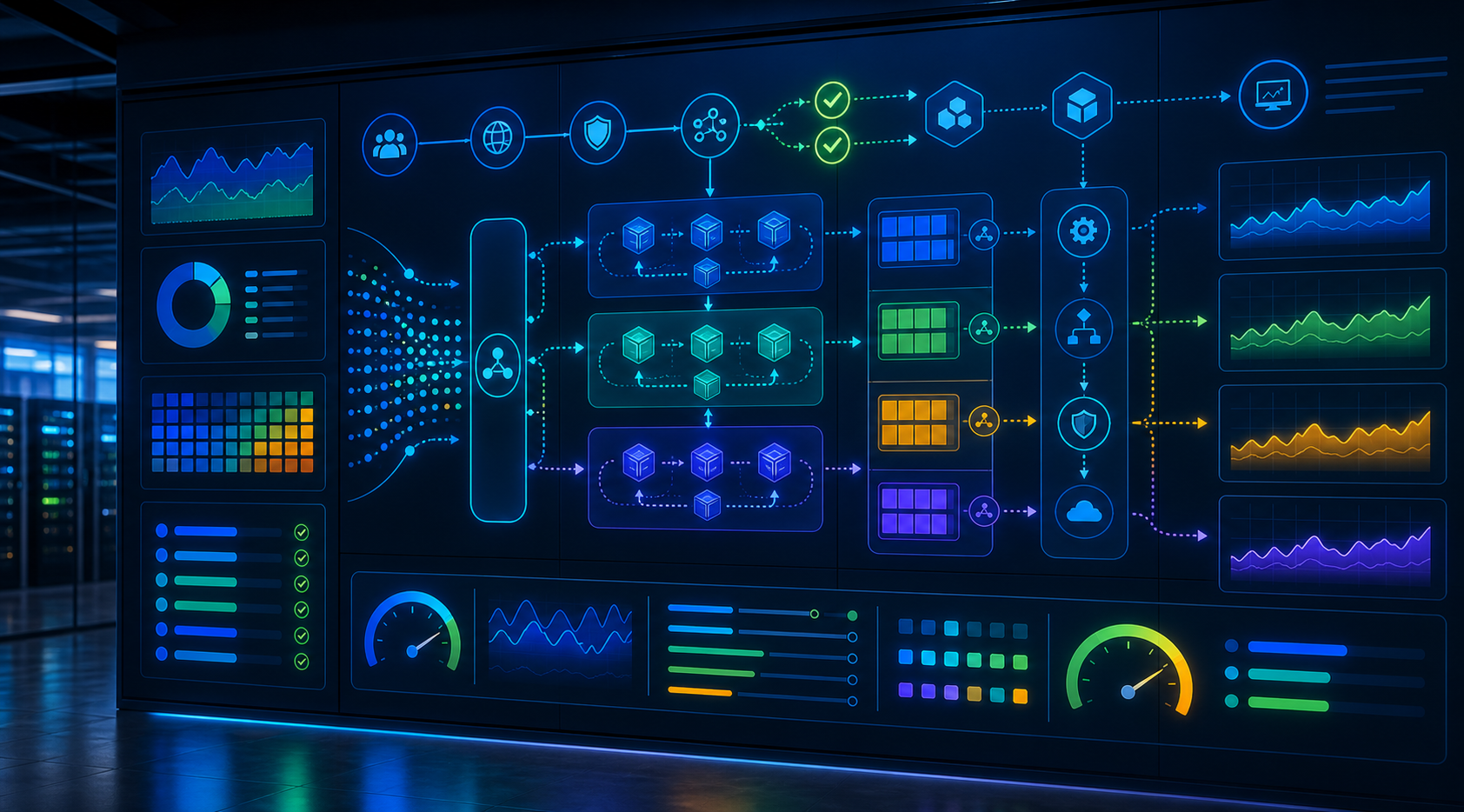

Where to Place Detection in the Pipeline

Performance regression detection can run at several points in the delivery flow, and the right placement depends on test cost, runtime, and release risk.

- Pull request stage: Run lightweight performance smoke checks on critical paths.

- Build verification stage: Execute short repeatable benchmarks against a stable environment.

- Nightly stage: Run broader load testing and model comparison on more realistic workloads.

- Pre-release stage: Perform stress testing, soak testing, and final benchmark validation.

- Post-deploy stage: Compare canary results against the learned baseline and production telemetry.

Not every pipeline needs every stage. Many teams start with one or two high-value checks, then expand once they trust the signal and automation.
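One way to keep this placement explicit is a small stage plan that the pipeline consults before running anything. Everything in the sketch below is hypothetical: the stage keys, the check names, and especially the `blocking` flags, which each team would set according to its own release risk.

```javascript
// Hypothetical mapping from pipeline stage to the performance checks it runs.
const stagePlan = {
  pullRequest: { checks: ['smoke'], blocking: true },
  buildVerification: { checks: ['benchmark'], blocking: true },
  nightly: { checks: ['load', 'modelComparison'], blocking: false },
  preRelease: { checks: ['stress', 'soak', 'benchmark'], blocking: true },
  postDeploy: { checks: ['canaryComparison'], blocking: false },
};

// Return the plan for a stage, or null if the team has not enabled it yet.
function planFor(stage, enabledStages) {
  if (!Object.prototype.hasOwnProperty.call(stagePlan, stage)) {
    throw new Error(`unknown stage: ${stage}`);
  }
  return enabledStages.includes(stage) ? stagePlan[stage] : null;
}

// A team starting out with only two high-value stages.
const enabled = ['pullRequest', 'nightly'];
const nightlyPlan = planFor('nightly', enabled);
const preReleasePlan = planFor('preRelease', enabled); // not enabled yet
```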

Example: A Simple Regression Scoring Step

The following example shows a lightweight JavaScript-based scoring approach that compares a new run against a learned baseline and marks suspicious changes. In real systems, the model might live in a separate service, but this kind of logic is useful for understanding the mechanics.

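    // Scores a run against its baseline; a higher score means a worse regression.
    function scorePerformanceRun(current, baseline) {
      // Weights sum to 1.0 and express how much each metric matters.
      const metrics = [
        { name: 'p95Latency', higherIsWorse: true, weight: 0.45 },
        { name: 'errorRate', higherIsWorse: true, weight: 0.25 },
        { name: 'cpuUsage', higherIsWorse: true, weight: 0.15 },
        { name: 'throughput', higherIsWorse: false, weight: 0.15 }
      ];
      let score = 0;
      const findings = [];

      for (const metric of metrics) {
        const base = baseline[metric.name];
        const value = current[metric.name];

        // A percentage change is undefined for a missing or zero baseline
        // (common for errorRate), so skip those here and cover them with an
        // absolute threshold elsewhere.
        if (!Number.isFinite(base) || !Number.isFinite(value) || base === 0) continue;

        // Positive deltaPct always means "got worse", whatever the metric's direction.
        const deltaPct = metric.higherIsWorse
          ? ((value - base) / base) * 100
          : ((base - value) / base) * 100;

        // Treat changes of 8% or less as noise.
        if (deltaPct > 8) {
          score += deltaPct * metric.weight;
          findings.push({ metric: metric.name, deltaPct: Number(deltaPct.toFixed(2)) });
        }
      }

      return {
        regressed: score >= 5,
        score: Number(score.toFixed(2)),
        findings
      };
    }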

This example is intentionally simple. In a mature pipeline, the baseline might be normalized by environment, load profile, and confidence interval, and the output would likely feed an alerting or quality-gate system.
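Normalizing by environment and load profile can start with something as plain as grouping runs by context, so that a run is only compared with runs collected under similar conditions. The sketch below assumes each run carries a `context` object with `workload`, `instanceType`, and `cacheState` fields; those names are illustrative.

```javascript
// Build a lookup key from the context that affects performance.
function baselineKey({ workload, instanceType, cacheState }) {
  return [workload, instanceType, cacheState].join('|');
}

// Group historical runs so each group can feed its own baseline.
function groupRunsByContext(runs) {
  const groups = new Map();
  for (const run of runs) {
    const key = baselineKey(run.context);
    if (!groups.has(key)) groups.set(key, []);
    groups.get(key).push(run);
  }
  return groups;
}

// Hypothetical history: two warm-cache checkout runs and one cold-cache run.
const history = [
  { context: { workload: 'checkout', instanceType: 'small', cacheState: 'warm' }, p95Latency: 200 },
  { context: { workload: 'checkout', instanceType: 'small', cacheState: 'warm' }, p95Latency: 210 },
  { context: { workload: 'checkout', instanceType: 'small', cacheState: 'cold' }, p95Latency: 420 },
];
const groups = groupRunsByContext(history);
const warmRuns = groups.get('checkout|small|warm');
```

Without the grouping, the cold-cache run would inflate the checkout baseline and hide real regressions in warm-cache runs.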

Building a Reliable Baseline Dataset

AI models are only as good as the data they learn from. That means baseline quality is a QA concern, not just a data science concern.

To create a reliable dataset:

- Run the same workload multiple times under controlled conditions.

- Record test duration, warm-up phase, and sample size.

- Capture environment metadata alongside metrics.

- Exclude runs with known infrastructure incidents or test-data corruption.

- Version the baseline so you can trace when the normal profile changed.
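The curation steps above could be sketched as a small filter that produces a versioned dataset. The run fields (`infraIncident`, `dataCorrupted`, `sampleCount`, `commitSha`) and the version string are hypothetical; the point is that exclusions and versioning are explicit and traceable.

```javascript
// Keep only trustworthy runs and stamp the result with a baseline version.
function buildBaselineDataset(runs, { minSamples, version }) {
  const eligible = runs.filter(
    (run) =>
      !run.infraIncident &&
      !run.dataCorrupted &&
      run.sampleCount >= minSamples
  );
  return {
    version,
    sourceCommits: eligible.map((run) => run.commitSha),
    excludedCount: runs.length - eligible.length,
    runs: eligible,
  };
}

// Hypothetical candidate runs.
const candidates = [
  { commitSha: 'a1', sampleCount: 5000, infraIncident: false, dataCorrupted: false },
  { commitSha: 'b2', sampleCount: 5200, infraIncident: true, dataCorrupted: false },
  { commitSha: 'c3', sampleCount: 300, infraIncident: false, dataCorrupted: false },
  { commitSha: 'd4', sampleCount: 4900, infraIncident: false, dataCorrupted: false },
];
const dataset = buildBaselineDataset(candidates, { minSamples: 1000, version: 'v1' });
```

Recording `excludedCount` and the source commits makes it easier to explain later why the normal profile looks the way it does.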

It is also important to separate functional changes from performance changes. A new feature may legitimately increase latency because it does more work. In that case, the regression detector should either compare against a new baseline or classify the behavior as an expected shift, not a failure.

What Makes a Good Alert

Performance alerts are useful only if they are actionable. If every build is flagged, the team will ignore the system. A good alert should answer three questions:

- What changed? Which metric or user journey deviated from baseline?

- How bad is it? What is the severity, confidence, and estimated impact?

- What should we inspect next? Which commit, component, or dependency is the likely cause?

In practice, this means your detection output should include summary context such as:

- Build number and commit SHA

- Affected service or endpoint

- Baseline version used for comparison

- Top contributing metrics

- Relevant logs, traces, or dashboards for triage
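A detection step might assemble that context into a single payload, as in the sketch below. The shape of the `run` object, the field names, and the choice to report the top three metrics are all assumptions for illustration.

```javascript
// Assemble an alert that answers: what changed, how bad, where to look next.
function buildAlert(run, baselineVersion, findings) {
  // Largest degradation first; copy so the caller's array is not reordered.
  const topMetrics = [...findings]
    .sort((a, b) => b.deltaPct - a.deltaPct)
    .slice(0, 3);
  return {
    build: run.buildNumber,
    commit: run.commitSha,
    service: run.service,
    baselineVersion,
    topMetrics,
    links: run.links, // logs, traces, or dashboards for triage
  };
}

// Hypothetical run and findings.
const alert = buildAlert(
  {
    buildNumber: 1287,
    commitSha: '9f3c2ab',
    service: 'checkout-api',
    links: { dashboard: 'https://example.com/dash/1287' },
  },
  'v1',
  [
    { metric: 'cpuUsage', deltaPct: 12.5 },
    { metric: 'p95Latency', deltaPct: 31.2 },
  ]
);
```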

The faster the QA team can move from detection to diagnosis, the more value the system delivers.

Common Pitfalls to Avoid

AI does not eliminate the classic failure modes of performance testing. It can actually amplify them if the underlying process is weak.

- Unstable environments: If test infrastructure changes constantly, the model will learn noise.

- Inconsistent workloads: If each run exercises different data or traffic, comparisons become unreliable.

- Overfitting to one baseline: A single benchmark snapshot is not enough for robust detection.

- Ignoring warm-up effects: JIT compilation, cache warming, and pool initialization can distort early metrics.

- Too many alerts: Overly sensitive models reduce trust and slow delivery.
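The warm-up pitfall is one of the easier ones to guard against in code. The sketch below drops samples taken during an initial warm-up window before any metric is computed; the sample shape and the 30-second window are assumptions for illustration.

```javascript
// Discard samples recorded during the warm-up window at the start of a run.
function dropWarmup(samples, warmupMs) {
  if (samples.length === 0) return [];
  // Use the earliest timestamp as the start, in case samples are unordered.
  const start = samples.reduce(
    (min, sample) => Math.min(min, sample.timestampMs),
    Infinity
  );
  return samples.filter((sample) => sample.timestampMs - start >= warmupMs);
}

// Hypothetical samples: the first two are slow because of cold caches and JIT.
const rawSamples = [
  { timestampMs: 0, latencyMs: 900 },
  { timestampMs: 5000, latencyMs: 400 },
  { timestampMs: 30000, latencyMs: 120 },
  { timestampMs: 45000, latencyMs: 118 },
];
const steadyState = dropWarmup(rawSamples, 30000);
```

Only the last two samples survive here, so percentiles computed from `steadyState` reflect warmed-up behavior instead of startup cost.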

QA teams should treat AI as a decision-support layer, not a replacement for careful test design. Clear workload definitions, controlled test data, and reproducible environments remain essential.

A QA-Focused Implementation Roadmap

If your team is starting from zero, the safest approach is incremental.

- Choose one critical user journey. Start with a path that matters to business and support teams.

- Collect stable performance data. Run the same benchmark repeatedly across several builds.

- Establish a baseline profile. Include variation, not just averages.

- Add anomaly detection. Use a simple model or statistical method before adopting complex ML.

- Connect results to CI/CD gates. Fail or warn on severe regressions only.

- Feed triage data to developers. Link metrics, logs, and traces to the build report.

- Review and tune regularly. Revisit thresholds, baselines, and false positive rates each sprint.

This phased approach minimizes risk and helps the team build confidence in the signal. Over time, you can expand to more services, richer baselines, and smarter anomaly models.
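The gating step in that roadmap can be sketched as a small function that maps a regression score to a pipeline outcome. The thresholds and names are assumptions; the design choice is that only severe regressions produce a nonzero exit code, while moderate ones surface as warnings.

```javascript
// Map a regression score to a CI outcome. Only "fail" blocks the pipeline.
function gateDecision(score, { warnAt, failAt }) {
  if (score >= failAt) return { status: 'fail', exitCode: 1 };
  if (score >= warnAt) return { status: 'warn', exitCode: 0 };
  return { status: 'pass', exitCode: 0 };
}

// Hypothetical thresholds and score.
const decision = gateDecision(7.5, { warnAt: 5, failAt: 15 });

// In a Node.js CI step, the result could be applied with:
//   process.exitCode = decision.exitCode;
```

Starting with a high `failAt` and lowering it as false positive rates drop is one way to build trust in the gate before it blocks merges.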

Conclusion

AI-powered performance regression detection is most valuable when it is grounded in solid QA practices: repeatable workloads, reliable baselines, and actionable alerts. Used well, it turns performance testing into a continuous guardrail inside CI/CD, helping teams detect latency spikes, throughput drops, and resource inefficiencies before they become production problems.

The goal is not to replace human judgment. The goal is to give QA, developers, and release engineers a better early-warning system. When baseline profiling, machine learning, and pipeline automation work together, performance becomes a first-class quality signal rather than an afterthought.